Ateme provides solutions that deliver a higher Quality of Experience to viewers. But what exactly do we mean by Quality of Experience? And what technology is required to deliver it? Read on to find out and discover the jargon around Quality of Experience.

4K

4K is a shorthand notation for digital image or video resolutions that measure 3840 pixels in width by 2160 pixels in height (3840×2160). The term is therefore an approximation of the width of the image in pixels (4000 or 4 kilo pixels using the metric system’s prefix for 1000). As such, a 4K TV or camera supports a maximum resolution of 3840×2160. The term is sometimes used as a synonym for UHDTV (or Ultra High Definition TV), although, strictly speaking, resolution is only one component of UHDTV.

8K

8K is a shorthand notation for digital image or video resolutions that measure 7680 pixels in width by 4320 pixels in height (7680×4320). The term is therefore an approximation of the width of the image in pixels (8000 or 8 kilo pixels using the metric system’s prefix for 1000). As such, an 8K TV or camera supports a maximum resolution of 7680×4320. 8K has four times as many pixels as 4K (3840×2160).
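The pixel arithmetic behind the 4K and 8K entries can be checked with a short sketch. The resolutions are the ones quoted above; the dictionary and function names are purely illustrative.

```python
# Resolutions as quoted in the 4K and 8K entries: (width, height) in pixels.
RESOLUTIONS = {
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name: str) -> int:
    """Return the total number of pixels in one frame of the named format."""
    width, height = RESOLUTIONS[name]
    return width * height


pixels_4k = pixel_count("4K")   # 8,294,400 pixels per frame
pixels_8k = pixel_count("8K")   # 33,177,600 pixels per frame

# Doubling both width and height quadruples the pixel count.
ratio = pixels_8k // pixels_4k  # 4
print(f"4K: {pixels_4k:,} px, 8K: {pixels_8k:,} px, ratio: {ratio}x")
```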

AC-4

AC-4 is the name of an audio coding format developed by Dolby. AC-4 improves on the compression efficiency of previous Dolby formats (such as AC-3 and Enhanced AC-3). It supports conventional channel-based encodings, including 5.1 surround sound as well as 7.1.4 configurations which include ceiling speakers. It also has extensive support for object-based coding, where each sound source, regardless of its position in the scene, is encoded independently. AC-4 has been adopted as one of the audio formats supported in the new ATSC 3.0 TV standard.

ATSC 3.0

ATSC 3.0 is the latest standard for terrestrial (over-the-air) television in North America. It was finalized in 2017 and first launched in South Korea that same year. Like its predecessor, ATSC 1.0, ATSC 3.0 is a digital standard (video, audio and data are represented and transported digitally), but that is where the similarities end. ATSC 3.0 uses more efficient encoding and transport technologies. It combines over-the-air broadcasts with broadband distribution to enable personalized content, targeted advertising, and interactive services, retaining both the efficiency of broadcast distribution and the flexibility offered by two-way broadband connections. Support for higher resolutions, greater picture contrast, deeper shades of color and immersive audio greatly enhances the user experience. These enhancements allow for new revenue models, such as premium services, transactional services, and greater ad revenues due to better targeting.

DD+

Dolby Digital Plus (DD+) is the name of an audio coding format developed by Dolby. It is also known as Enhanced AC-3 (E-AC-3). As suggested by this latter name, it has a number of improvements over AC-3, including support for more channels, as well as a wider range of bitrates. DD+ is widely supported in the production and distribution of professionally produced multimedia content.

Dolby Atmos

Atmos is a technology developed by Dolby to enable immersive or 3D audio. Traditional surround sound is enhanced with the addition of ‘height speakers’ – that is, speakers that emulate sound coming from above. The end user is thus immersed in a 3D sound field – particularly when a 7.1.4 configuration is used (three front speakers, two side speakers, two rear speakers, four ceiling speakers and the subwoofer). Atmos also supports the concept of ‘audio objects’. Instead of encoding sound as different channels, each audio source is encoded individually and then placed in a sound field. This allows customized mixing of sound sources by end users. It also allows interactive experiences (such as AR and VR) where the location of the sound source is adapted to the head motion of the end user.
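The 7.1.4 notation used above can be unpacked with a small sketch. The parser below is a hypothetical helper written for this glossary, not part of any Dolby tool; it assumes the common reading of the notation as ear-level speakers, subwoofers, and height speakers.

```python
from typing import NamedTuple


class SpeakerLayout(NamedTuple):
    ear_level: int   # front, side and rear speakers
    subwoofers: int  # low-frequency channel(s)
    height: int      # ceiling / height speakers

    @property
    def total(self) -> int:
        """Total number of speakers in the layout."""
        return self.ear_level + self.subwoofers + self.height


def parse_layout(notation: str) -> SpeakerLayout:
    """Parse 'X.Y' or 'X.Y.Z' speaker notation; the height count defaults to 0."""
    parts = [int(p) for p in notation.split(".")]
    if len(parts) == 2:
        parts.append(0)  # e.g. '5.1' has no height speakers
    if len(parts) != 3:
        raise ValueError(f"unsupported layout notation: {notation!r}")
    return SpeakerLayout(*parts)


atmos = parse_layout("7.1.4")
# 3 front + 2 side + 2 rear = 7 ear-level speakers, 1 subwoofer, 4 ceiling speakers
print(atmos, "total speakers:", atmos.total)

surround = parse_layout("5.1")
print(surround, "total speakers:", surround.total)
```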

DD+JOC

The term Joint Object Coding (JOC) is used in conjunction with Dolby Digital Plus (DD+JOC). DD+JOC streams combine a legacy 5.1-channel DD+ stream with sideband metadata to allow reconstruction of a Dolby Atmos mix. Legacy decoders can ignore the metadata and render traditional 5.1 audio, while Atmos-capable decoders can provide the enhanced immersive experience enabled by Atmos.

HDR

High Dynamic Range (HDR) refers to the greater ability of new displays and screens to represent a much wider range of brightness levels. Classic TVs and monitors based on cathode ray tube technology could typically represent brightness in the range of 1 to 100 candelas per square metre (cd/m², or nits, as they are more informally known). This range, now referred to as Standard Dynamic Range (SDR), is orders of magnitude smaller than what the human visual system is capable of perceiving. Displays based on newer technologies such as OLED, Quantum Dot (QD) and QD-LED achieve a range of 0.1 to 1000 nits and beyond. This results in a more vivid rendition of the scene, and also in less risk of content looking washed out in bright areas or being difficult to see in dark areas.
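To put the quoted brightness figures side by side, the sketch below computes the contrast ratio for each range and expresses it in photographic stops (doublings of brightness). The use of stops is an illustrative choice made here, not something the definitions above depend on, and the function name is hypothetical.

```python
import math
from typing import Tuple


def dynamic_range(min_nits: float, max_nits: float) -> Tuple[float, float]:
    """Return (contrast ratio, range in stops) for a given brightness range."""
    ratio = max_nits / min_nits
    stops = math.log2(ratio)  # one stop = one doubling of brightness
    return ratio, stops


# Brightness figures as quoted in the HDR entry.
sdr_ratio, sdr_stops = dynamic_range(1, 100)     # CRT-era displays (SDR)
hdr_ratio, hdr_stops = dynamic_range(0.1, 1000)  # newer displays (HDR)

print(f"SDR: {sdr_ratio:,.0f}:1 (~{sdr_stops:.1f} stops)")
print(f"HDR: {hdr_ratio:,.0f}:1 (~{hdr_stops:.1f} stops)")
```

With these figures, the HDR range spans a contrast ratio about 100 times larger than the SDR range.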

HFR

High Frame Rate (HFR) refers to video frame rates that are greater than the traditional frame rates used in movies (24 fps), European broadcast television (25 fps) and North American broadcast television (roughly 30 fps). Typical HFR values are 50 fps (Europe) and 60 fps (North America) although, in recent years, there have been demonstrations of 100/120 fps video distribution for sports events.
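One way to see what a higher frame rate buys is to look at how long each frame stays on screen. The sketch below uses the rates listed above; it assumes that "roughly 30 fps" means the NTSC-derived rate of 30000/1001 (about 29.97 fps), and the labels and function name are illustrative.

```python
from fractions import Fraction

# Frame rates from the HFR entry; exact fractions avoid rounding surprises.
FRAME_RATES = {
    "film": Fraction(24),
    "European broadcast": Fraction(25),
    "North American broadcast": Fraction(30000, 1001),  # ~29.97 fps (assumed)
    "HFR (Europe)": Fraction(50),
    "HFR (North America)": Fraction(60),
}


def frame_interval_ms(fps: Fraction) -> float:
    """Return the time each frame is displayed, in milliseconds."""
    return float(1000 / fps)


for label, fps in FRAME_RATES.items():
    print(f"{label:26s} {float(fps):6.2f} fps -> {frame_interval_ms(fps):5.2f} ms per frame")
```

Doubling the frame rate halves the time between frames, which is why fast motion in sports looks smoother at 50 or 60 fps.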

Low latency

One of the challenges of OTT streaming is that the protocols used to deliver video content to a multiscreen environment add latency to the video stream: viewers may see a live event 30 to 40 seconds after it actually happened. Because traditional video-delivery technologies (terrestrial, satellite, IPTV) deliver with much lower latency (typically 5 to 10 seconds), OTT viewers can have key moments spoiled before they see them. The aim of low-latency streaming is to update the streaming protocol specifications (also relying on CMAF) in order to achieve the same latency in OTT as in traditional video delivery (5 to 10 seconds).
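The size of the spoiler window follows directly from the latency figures quoted above. The sketch below is a simple subtraction under those figures; the function name is hypothetical.

```python
def spoiler_gap(ott_latency_s: float, broadcast_latency_s: float) -> float:
    """Return how many seconds an OTT viewer lags behind a broadcast viewer."""
    return ott_latency_s - broadcast_latency_s


# Latency ranges as quoted in the entry: OTT 30-40 s, traditional delivery 5-10 s.
smallest_gap = spoiler_gap(30, 10)  # fastest OTT vs slowest broadcast
largest_gap = spoiler_gap(40, 5)    # slowest OTT vs fastest broadcast

print(f"OTT viewers lag broadcast viewers by {smallest_gap} to {largest_gap} seconds")
```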

MPEG-H

MPEG-H is an ‘umbrella standard’ developed by the Moving Picture Experts Group (MPEG) and first published in 2013. It encompasses numerous individual standards, such as the MPEG Media Transport (MMT) for carriage of audio, video, and data (MPEG-H Part 1), HEVC for encoding video (MPEG-H Part 2), and a new compression standard for encoding immersive and object-based audio (MPEG-H Part 3). MPEG-H technologies were slow to gain traction, but now HEVC has become the de facto standard for encoding 4K and UHD video, in both traditional TV broadcasts and OTT services. Also, MMT and MPEG-H audio have been selected for inclusion in both the ATSC 3.0 and the TV 3.0 standards.

NGA

NGA stands for Next Generation Audio. It refers to new audio formats and technologies that enable audio experiences beyond 5.1 surround sound. These include immersive or 3D audio (typically a 7.1.4 channel setup, which includes four ceiling channels); dialog enhancement abilities, which enable end users to change the mix between different sound sources (such as commentator and arena sounds in a sports broadcast); and object-based audio renditions, which enable consumers to engage in interactive VR or AR activities where the position of sound sources adapts to user head movements.

Quality of Experience (QoE)

Quality of Experience (QoE), in the context of this glossary, refers to the overall level of satisfaction of end users with the technical aspects of their media and entertainment experience. This includes picture and sound quality, availability of captions or subtitles, proper synchronization between video, audio and captions, responsiveness to channel changes or Fast Forward and Rewind, start-up time for VOD content, latency for live events, etc. It does not include user satisfaction with the actual content, or issues with billing, prices or customer service from the service provider.

TV 3.0

TV 3.0 is the latest standard for terrestrial (over-the-air) television in Brazil. It shares many of the same design goals and technologies as ATSC 3.0, its counterpart in North America. These include enhanced user experience through higher resolutions, High Dynamic Range, Wide Color Gamut and Next Generation Audio; interactivity and targeted advertising by combining over-the-air and broadband distribution; and more efficient use of the available spectrum by incorporating the latest video and audio compression standards. Final technology recommendations were made in January 2022 and the system is scheduled to launch in 2024.

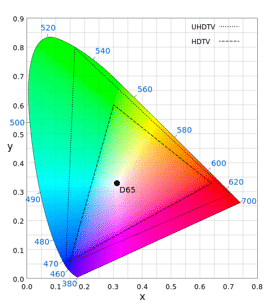

Wide Color Gamut (WCG)

Wide Color Gamut (WCG) refers to the greater ability of new displays and screens to represent a much wider and richer range of colors. Classic TVs and monitors based on cathode ray tube technology were only able to represent a fraction of the colors perceivable by humans, often resulting in colors that looked washed out. Displays based on newer technologies such as OLED, Quantum Dot (QD) and QD-LED can represent a wider set of colors, resulting in much deeper shades of color. Renditions, therefore, appear much more vivid and life-like.

The figure shows the range of colors displayed by earlier TVs (smaller triangle), the wide color gamut available in UHDTVs (larger triangle) and the full range of colors that humans can perceive (large conic shape).